Henryk Michalewski

Bio

Prior to 2015, my research focused on pure mathematics and theoretical computer science, covering logic, foundations, game theory, and optimization. I subsequently pivoted to work exclusively on machine learning. Throughout my career, I have been fortunate to work with leadership that supported new research directions and to collaborate with exceptional engineers and researchers who made it possible to pursue them.

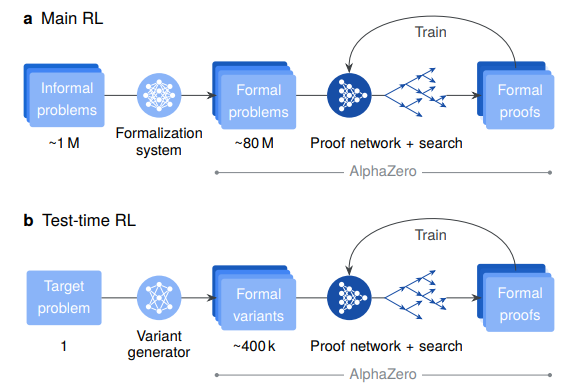

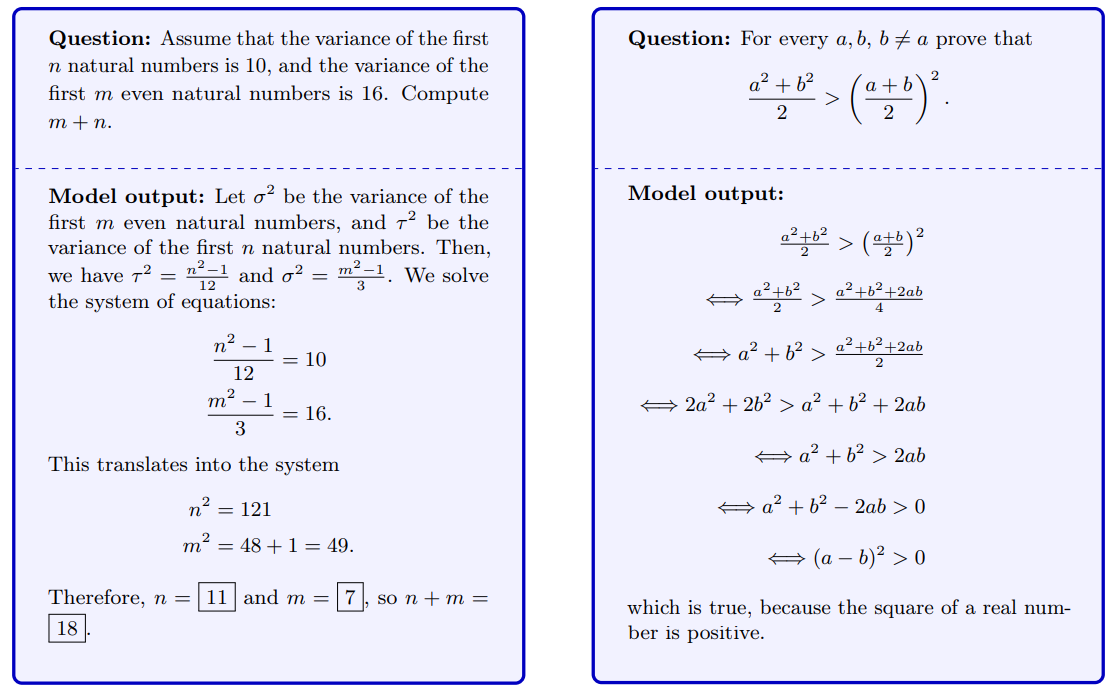

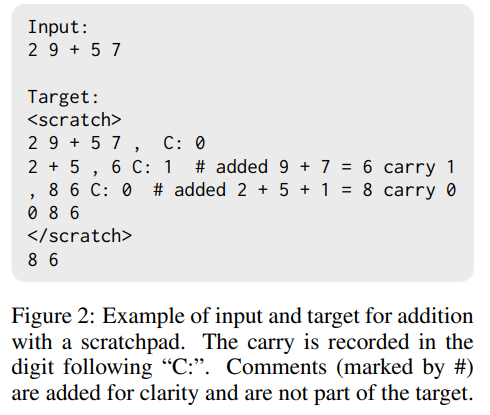

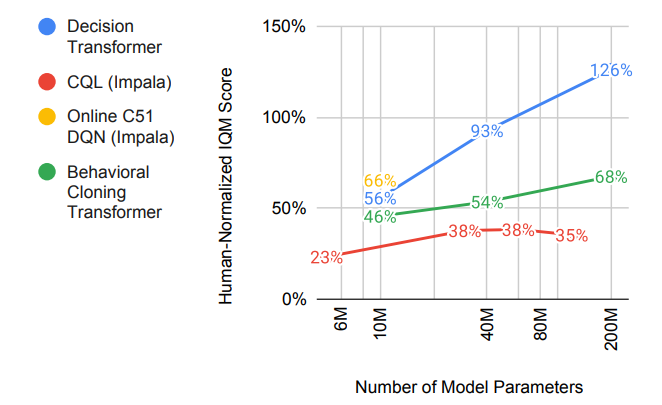

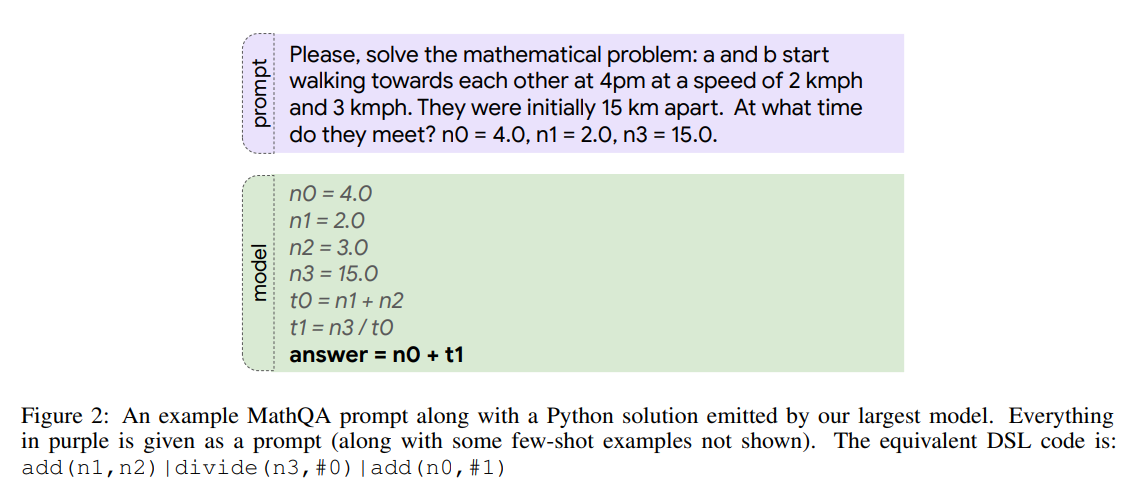

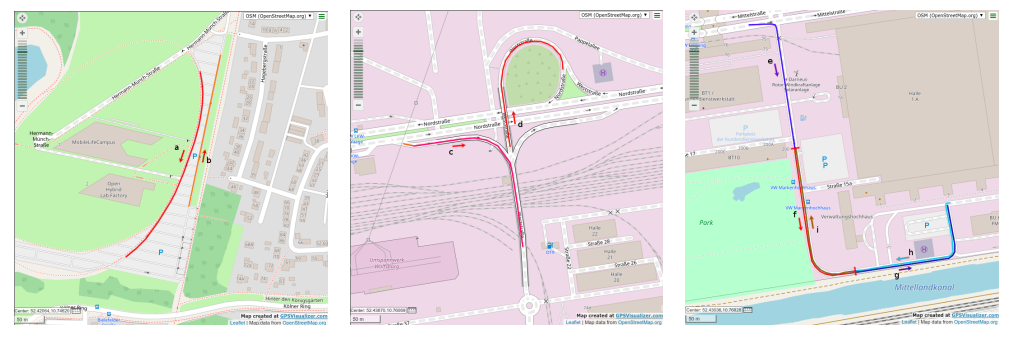

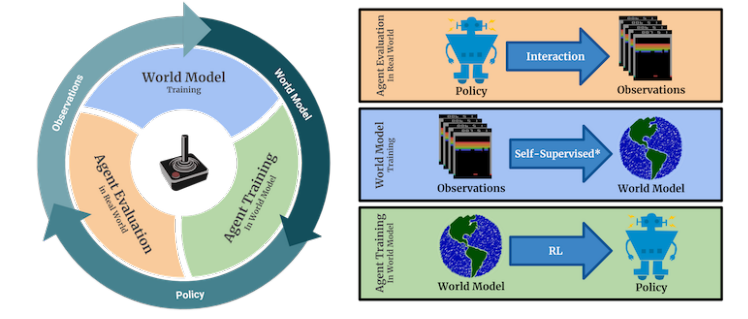

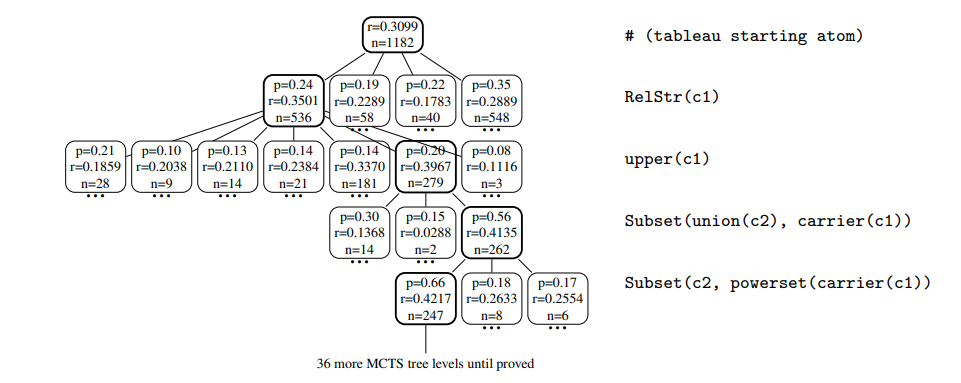

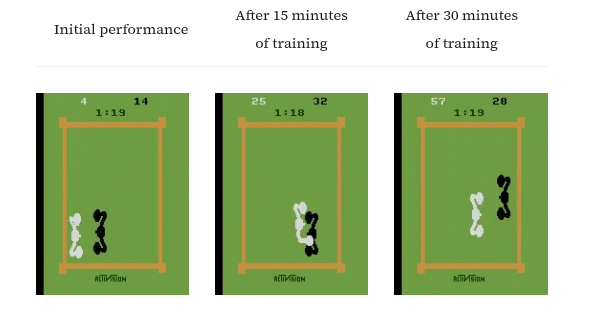

Before joining Google in 2019, I worked on reinforcement learning for theorem proving, early deep RL scaling experiments with Intel, a sim2real project with Volkswagen, and model-based RL. At Google, I have contributed to PaLM, early program synthesis work with LLMs, Scratchpad, and Minerva. More recently, I worked on the math-specialized model presented in the Gemini 1.5 report, Big Sleep, AlphaProof, and all iterations of the main Gemini models. Leveraging Google’s infrastructure, I have conducted thousands of experiments and submitted over 1,000 pull requests—roughly half of which were to the Gemini codebase.

Work Engagements

Open Source Contributions

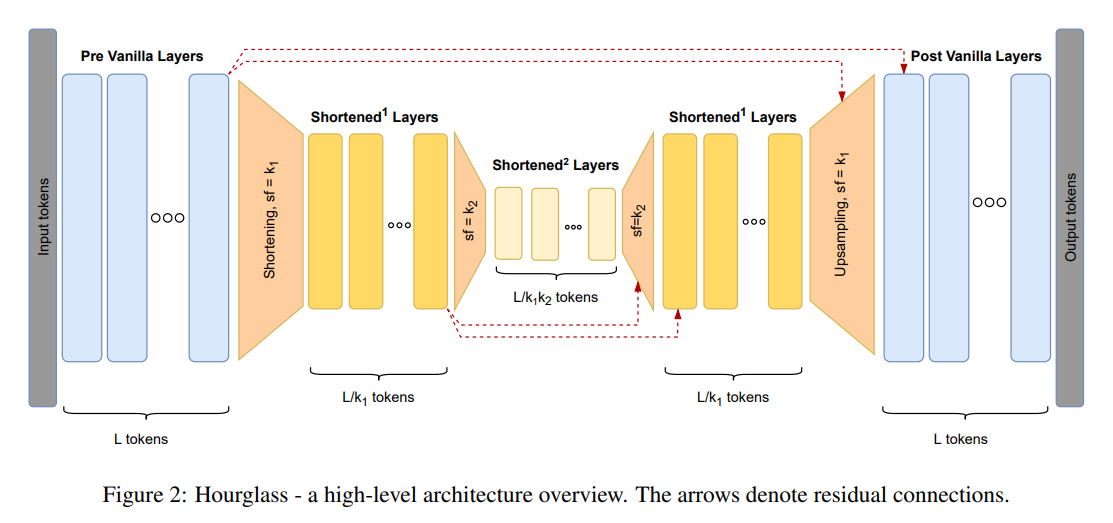

- Trax — contributions to sequence modeling, training pipelines, and reasoning-focused components.

- Formal Putnam-like Benchmark — co-developer of an olympiad-level mathematical reasoning evaluation suite.

- Eval-Hub — contributor to a unified evaluation framework for LLM reasoning, code generation, and multimodal tasks.

Patents based on the Scratchpad paper contributions

-

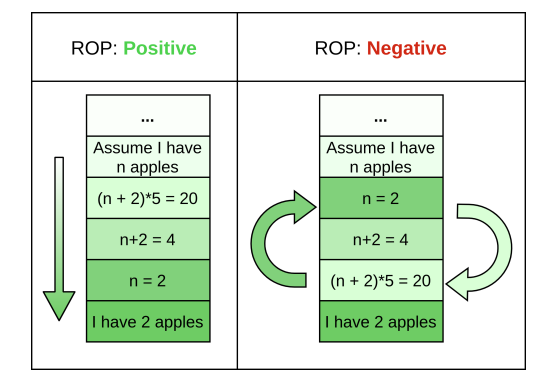

Prompting Machine-Learned Models Using Chains of Thought — chain-of-thought prompting and consistency-based selection of model outputs for improved reasoning robustness.

- Using Chains of Thought to Prompt Machine-Learned Models Pre-Trained on Diversified Objectives: construction of instructive query–answer–reasoning triples for steering large pre-trained models via chain-of-thought prompts.

Education

Mentoring of Students

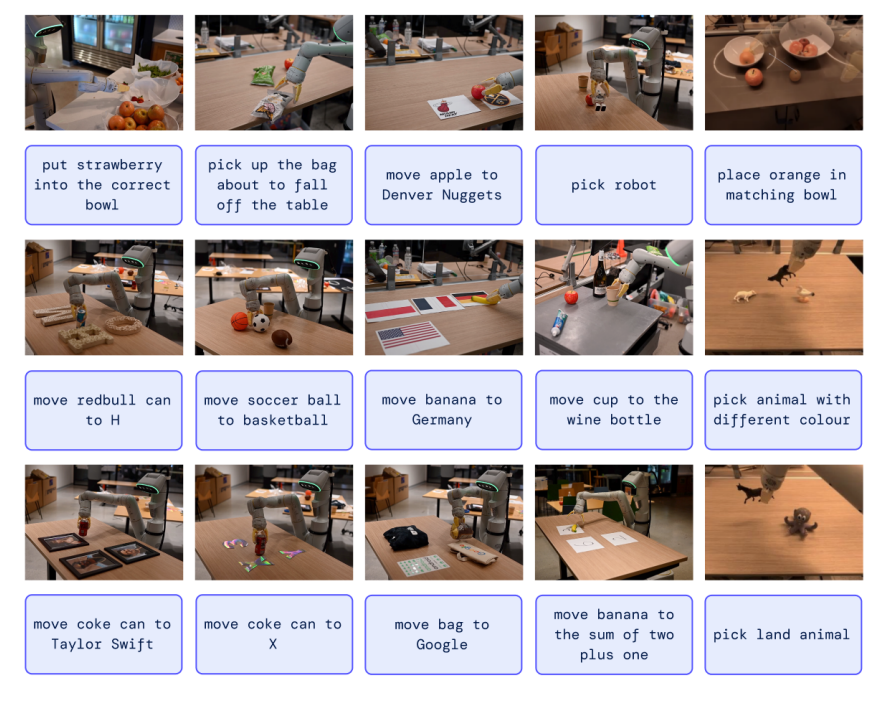

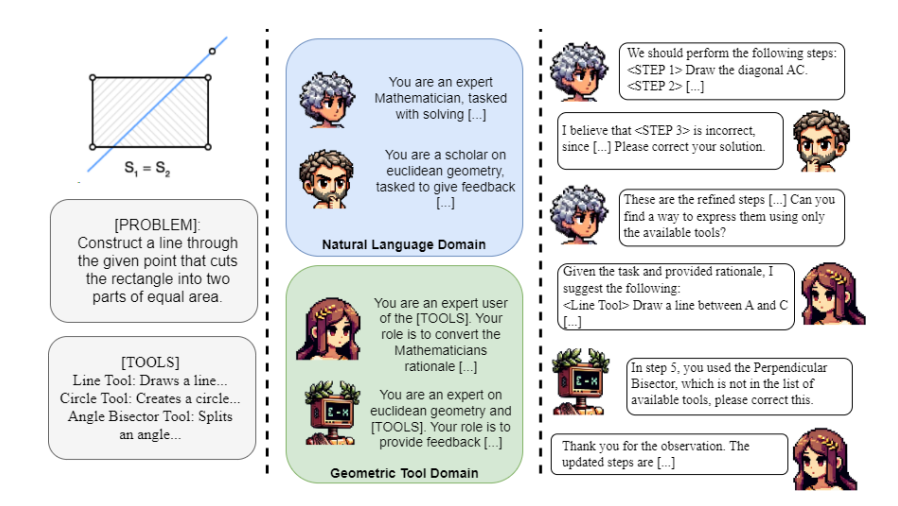

Spyridon Mouselinos, 2021–2025, PhD project "Towards Visual Reasoning", co-supervised with Mateusz Malinowski (Google DeepMind).

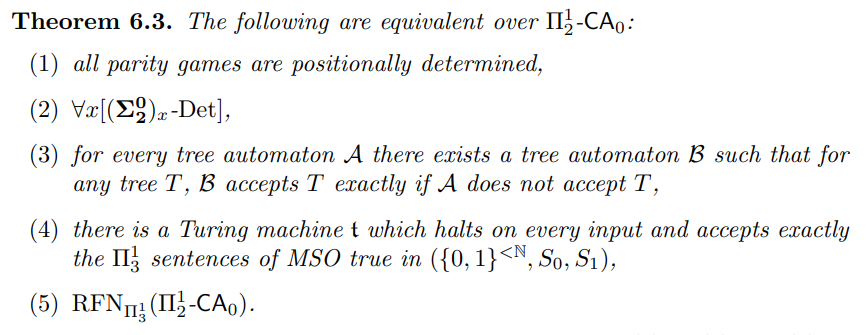

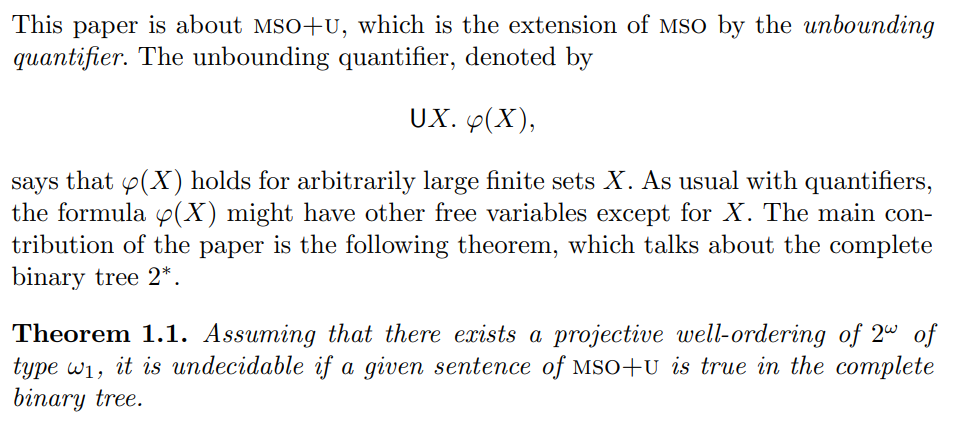

Cécilia Pradic, 2014–2019, PhD thesis "Some proof-theoretical approaches to Monadic Second-Order logic", co-supervised with Colin Riba (ENS Lyon).
